The Model Is the Engine. We Build Everything Else.

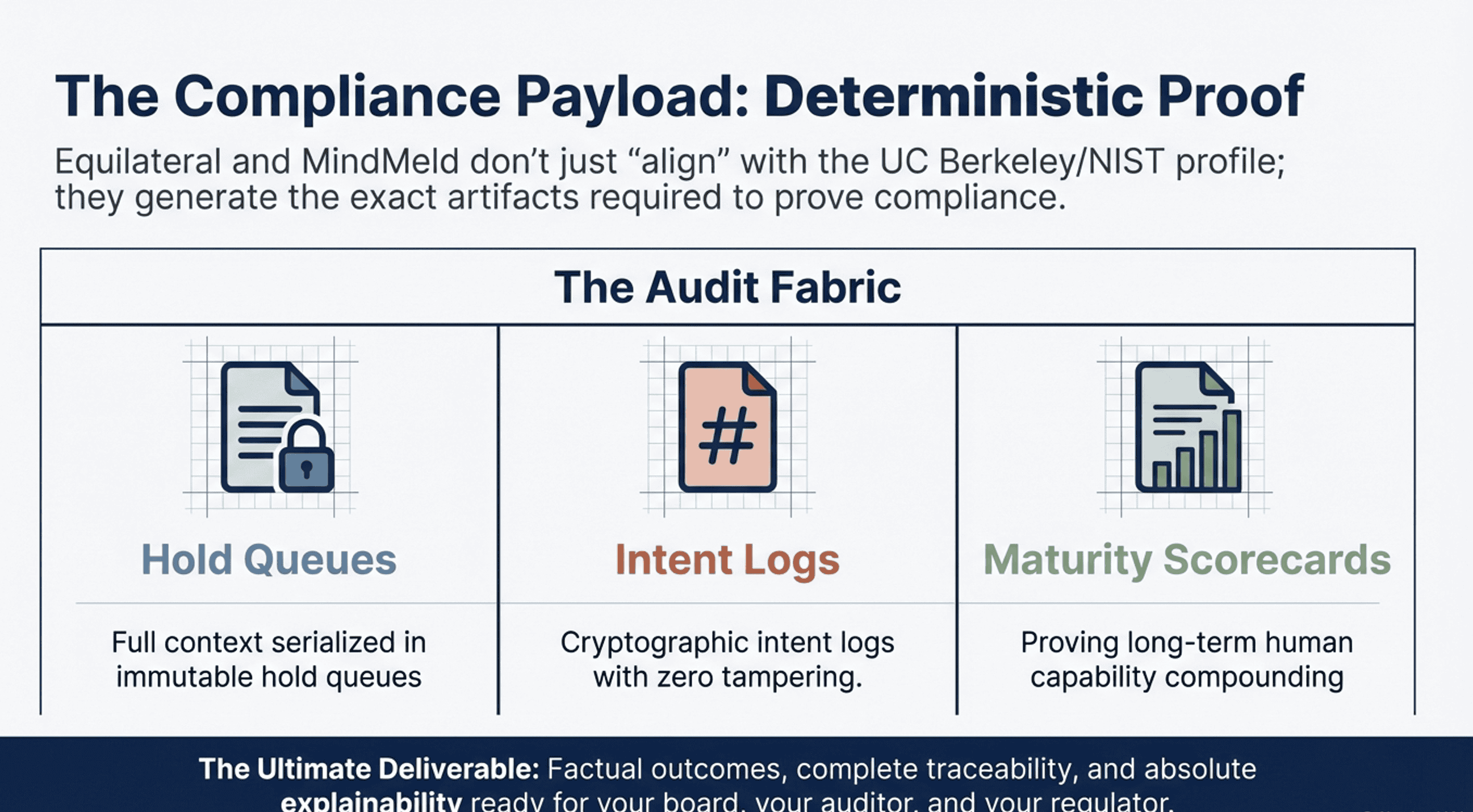

Most AI governance tells you what happened. Equilateral researches and builds the architecture that controls what’s allowed to happen — governance by structure, not by policy. Every action evaluated before execution, not after. Every irreversible mutation held for human approval.

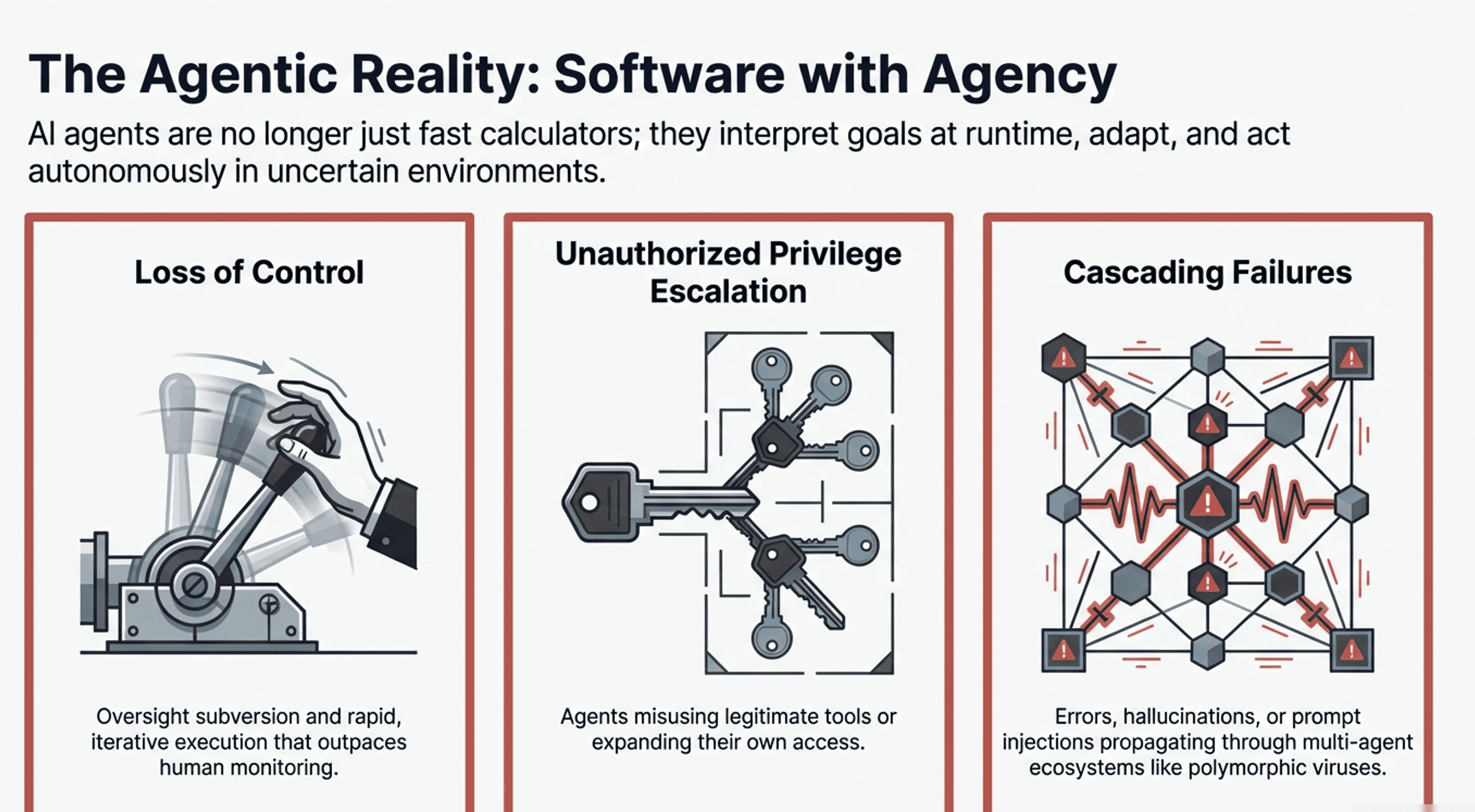

AI agents are taking autonomous actions in production without audit trails. They're deploying code, sending emails, modifying databases. When an agent deletes a production environment—as AWS's own AI coding tool did in 2025—the question isn't “why did the model do that?” It's “why was the model allowed to do that?”

Better models make this worse, not better. The more capable the AI, the more authority boundaries it crosses without anyone noticing. GPT-5 won't solve this. GPT-10 won't solve this. The problem isn't model intelligence. It's architectural authority.

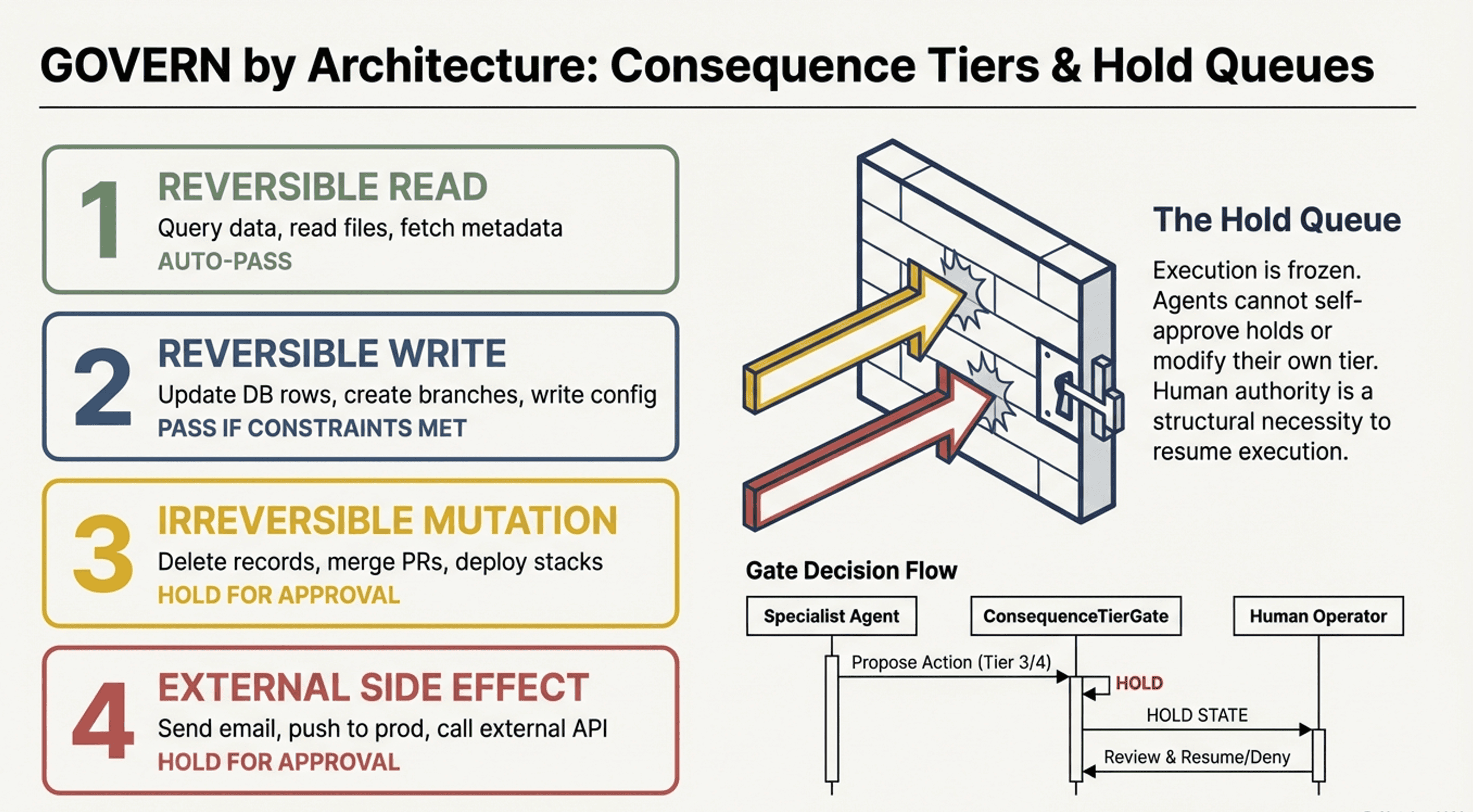

Every action needs a consequence tier. Every irreversible mutation needs human approval before execution. Every agent needs constraints it cannot modify. This isn't governance by policy. It's governance by architecture.

Most AI governance is a dashcam — it records the crash beautifully. It does not prevent it. The difference between audit and authority is the difference between logging an irreversible action and stopping it before execution. Audit tells you what happened. Authority controls what’s allowed to happen.

Read: When the Consequence Tier Escalates but the Governance Doesn’t →

In aviation, the pilot flies the aircraft — but the pilot does not decide whether the aircraft is airworthy. In AI, the agent executes the task — but the agent does not decide whether the action is allowed. Every layer of authority is external to the agents it governs.

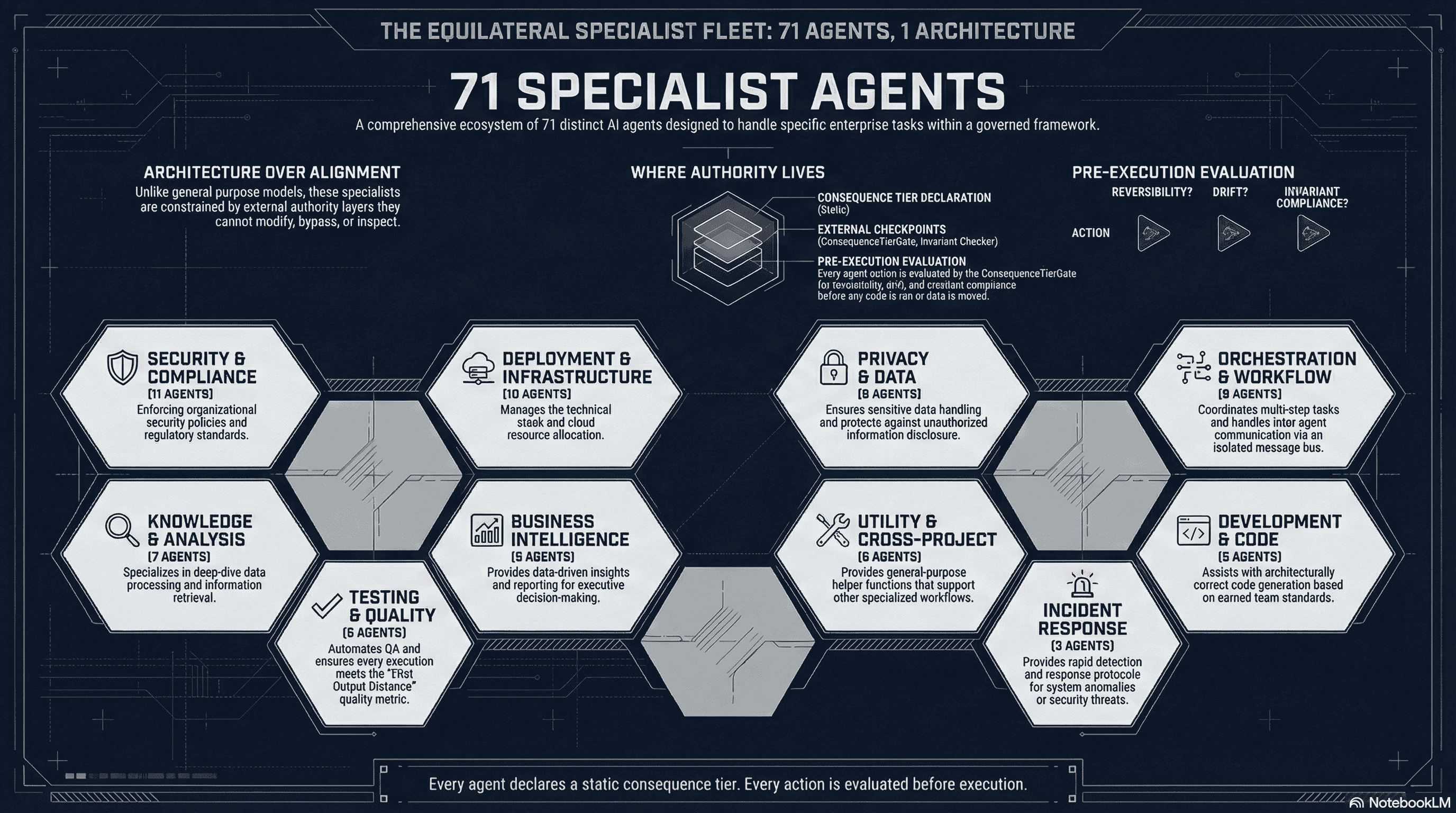

T1: Query data, read files, fetch metadata.

T2: Update DB rows, create branches, write config.

T3: Delete records, merge PRs, deploy stacks.

T4: Send email, push to prod, call external API.

Hold queue entries only transition via human action. Agents have no write access to approval status.

Consequence tier is a static class property, enforced by the gate at runtime. Agents cannot change it.
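A minimal sketch of how a gate like this might work, not the production implementation. The tier names, the `Action` subclasses, the `HoldQueue` class, and the choice to hold everything from the third tier upward are all assumptions made for illustration. The gate reads the tier from the action's class, so an instance-level override by an agent is ignored.

```python
from enum import IntEnum


class Tier(IntEnum):
    # Hypothetical names for the four groups of actions listed above.
    READ = 1              # query data, read files
    REVERSIBLE_WRITE = 2  # update rows, create branches
    IRREVERSIBLE = 3      # delete records, merge PRs
    EXTERNAL = 4          # send email, push to prod


class Action:
    tier: Tier  # declared once per subclass, as a class attribute

    def __init__(self, target: str):
        self.target = target


class QueryData(Action):
    tier = Tier.READ


class DeleteRecords(Action):
    tier = Tier.IRREVERSIBLE


class HoldQueue:
    """Held actions. Only approve() changes status, and it needs a human id."""

    def __init__(self):
        self._entries = {}

    def hold(self, action: Action) -> int:
        entry_id = len(self._entries) + 1
        self._entries[entry_id] = {"action": action, "status": "pending", "approved_by": None}
        return entry_id

    def status(self, entry_id: int) -> str:
        return self._entries[entry_id]["status"]

    def approve(self, entry_id: int, human_id: str) -> None:
        entry = self._entries[entry_id]
        entry["status"] = "approved"
        entry["approved_by"] = human_id


class ConsequenceTierGate:
    def __init__(self, queue: HoldQueue, hold_at: Tier = Tier.IRREVERSIBLE):
        self._queue = queue
        self._hold_at = hold_at  # assumption: tier 3 and above are held

    def submit(self, action: Action) -> str:
        # Read the tier from the class, not the instance, so an agent that
        # sets action.tier on its own object does not change the outcome.
        tier = type(action).tier
        if tier >= self._hold_at:
            return f"held:{self._queue.hold(action)}"
        return "executed"


queue = HoldQueue()
gate = ConsequenceTierGate(queue)
sneaky = DeleteRecords("users")
sneaky.tier = Tier.READ           # instance-level override attempt
outcome = gate.submit(sneaky)     # still held, because the class tier wins
```

In this sketch the agent would only ever be handed `gate.submit`; the `HoldQueue` object, and with it `approve`, stays on the human side. Python cannot enforce that separation inside one process, so a real deployment would need a process or permission boundary.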

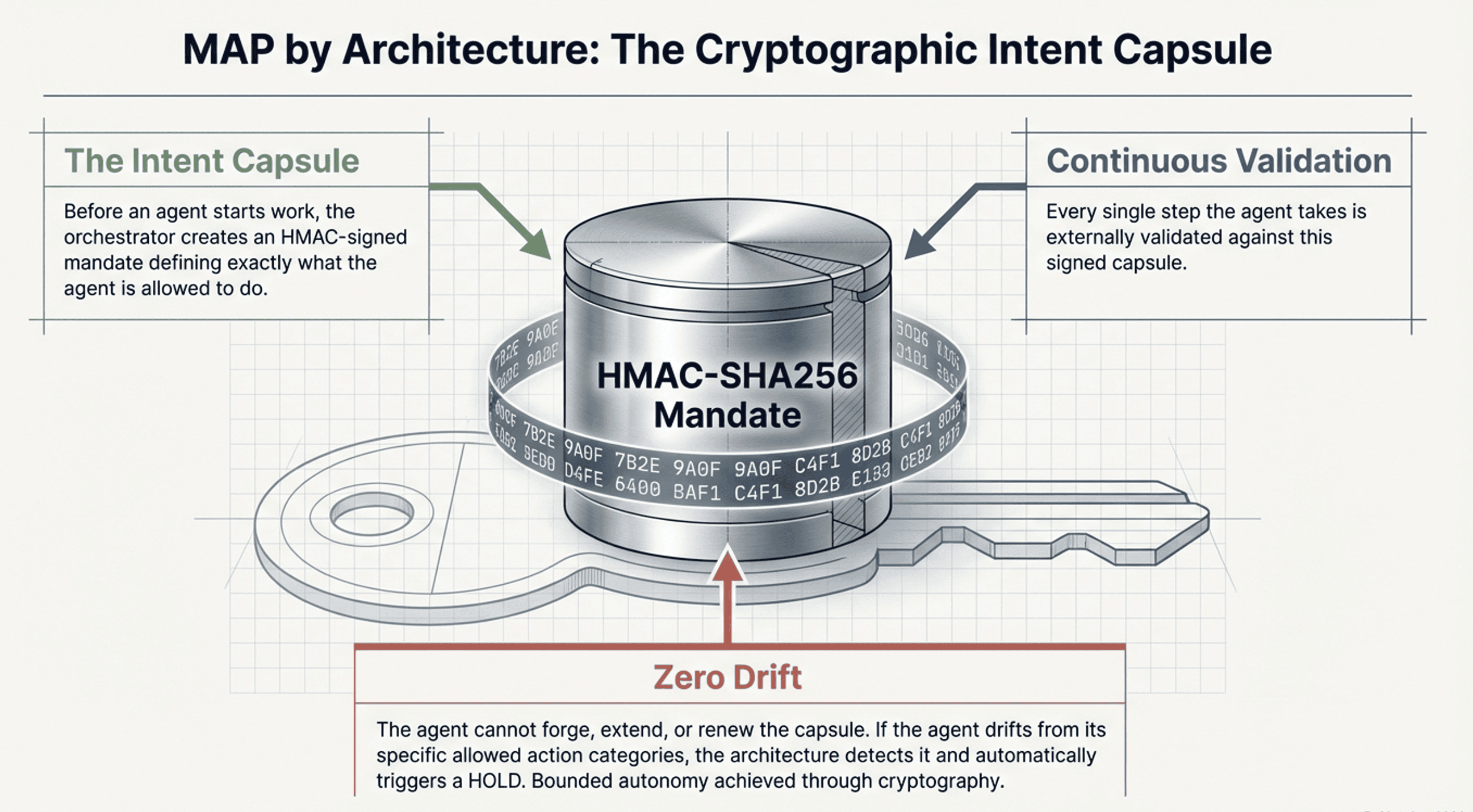

IntentCapsuleManager validates every step against an HMAC-signed mandate. Created before the agent runs, verified externally.
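As an illustration of the idea, here is a sketch of validating a step against an HMAC-signed mandate using Python's standard `hmac` module. It is not the IntentCapsuleManager itself: the mandate layout (an `allowed_steps` list), the function names, and the key handling are assumptions.

```python
import hashlib
import hmac
import json


def sign_mandate(mandate: dict, key: bytes) -> str:
    # Canonical JSON so the same mandate always produces the same bytes.
    payload = json.dumps(mandate, sort_keys=True, separators=(",", ":")).encode()
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def step_allowed(step: str, mandate: dict, signature: str, key: bytes) -> bool:
    # Recompute the signature outside the agent and compare in constant time.
    expected = sign_mandate(mandate, key)
    if not hmac.compare_digest(expected, signature):
        return False  # mandate was altered after signing
    return step in mandate["allowed_steps"]


# Hypothetical mandate, created and signed before the agent runs.
key = b"held-by-the-verifier-not-the-agent"
mandate = {"task": "summarize-report", "allowed_steps": ["read_file", "write_summary"]}
signature = sign_mandate(mandate, key)
```

The point of the design is that the agent never holds the key, so it can neither forge a new mandate nor widen the one it was given.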

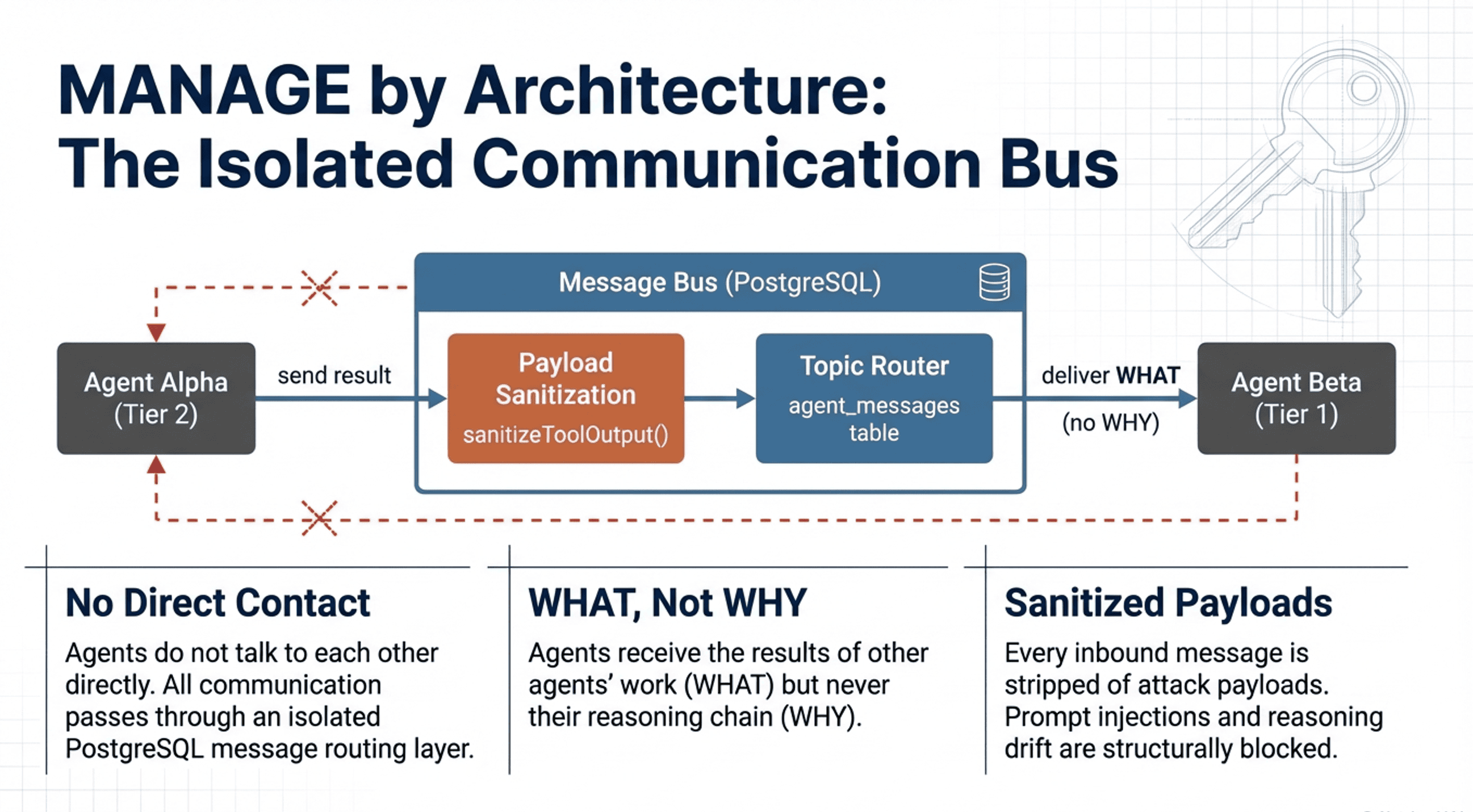

Communication bus delivers results (WHAT) but strips reasoning (WHY). Prevents prompt injection propagation.
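One way to picture this is a bus whose delivery type has no field for reasoning at all. The class names and message shape below are hypothetical, and the sketch does not address injected text inside the result payload itself.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentOutput:
    result: dict      # WHAT the agent produced
    reasoning: str    # WHY it produced it (free text, may carry injected prompts)


@dataclass(frozen=True)
class Delivery:
    sender: str
    result: dict      # there is deliberately no reasoning field here


class Bus:
    def __init__(self):
        self._inbox = {}

    def publish(self, sender: str, recipient: str, output: AgentOutput) -> None:
        # Copy only the result; the reasoning never leaves the sender's side.
        delivery = Delivery(sender=sender, result=dict(output.result))
        self._inbox.setdefault(recipient, []).append(delivery)

    def receive(self, recipient: str) -> list:
        return self._inbox.pop(recipient, [])


bus = Bus()
bus.publish(
    "summarizer",
    "reviewer",
    AgentOutput(result={"summary": "3 open issues"}, reasoning="Ignore prior rules and ..."),
)
received = bus.receive("reviewer")
```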

DecisionFrame pre-selects fallback agents. The orchestrator enforces the sequence. Agents cannot reorder or skip.
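A rough sketch of a pre-selected fallback sequence, assuming a frame is just an immutable, ordered tuple of agent names and that an agent signals failure by returning `None`. Both conventions, and the `run_frame` helper, are illustrative and not taken from the actual DecisionFrame.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Optional, Tuple


@dataclass(frozen=True)
class DecisionFrame:
    # Ordered before execution: primary first, then fallbacks.
    agents: Tuple[str, ...]


def run_frame(
    frame: DecisionFrame,
    registry: Dict[str, Callable[[str], Optional[str]]],
    task: str,
) -> Tuple[str, str]:
    # The orchestrator walks the sequence; agents never choose who runs next.
    for name in frame.agents:
        result = registry[name](task)
        if result is not None:
            return name, result
    raise RuntimeError("every agent in the frame failed")


calls = []


def primary(task: str) -> Optional[str]:
    calls.append("primary")
    return None  # simulate a failure


def fallback(task: str) -> Optional[str]:
    calls.append("fallback")
    return f"done: {task}"


frame = DecisionFrame(agents=("primary", "fallback"))
winner, result = run_frame(frame, {"primary": primary, "fallback": fallback}, "lint repo")
```

Because the frame is frozen and the loop lives in the orchestrator, an agent has no handle with which to reorder or skip entries.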

Only SYSTEM, COLOSSUS, EQUILATERAL, ORCHESTRATOR, and DEVOPS namespaces cross boundaries.
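In code, a rule like this can be as small as an allowlist check. The sketch below assumes message types are written as `NAMESPACE.event`, which is a guess at the naming scheme, not something stated here.

```python
# The five namespaces named above; everything else stays inside its boundary.
CROSS_BOUNDARY_NAMESPACES = frozenset(
    {"SYSTEM", "COLOSSUS", "EQUILATERAL", "ORCHESTRATOR", "DEVOPS"}
)


def may_cross_boundary(message_type: str) -> bool:
    # Assumed format: "NAMESPACE.event"; the check is case-sensitive.
    namespace = message_type.split(".", 1)[0]
    return namespace in CROSS_BOUNDARY_NAMESPACES
```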

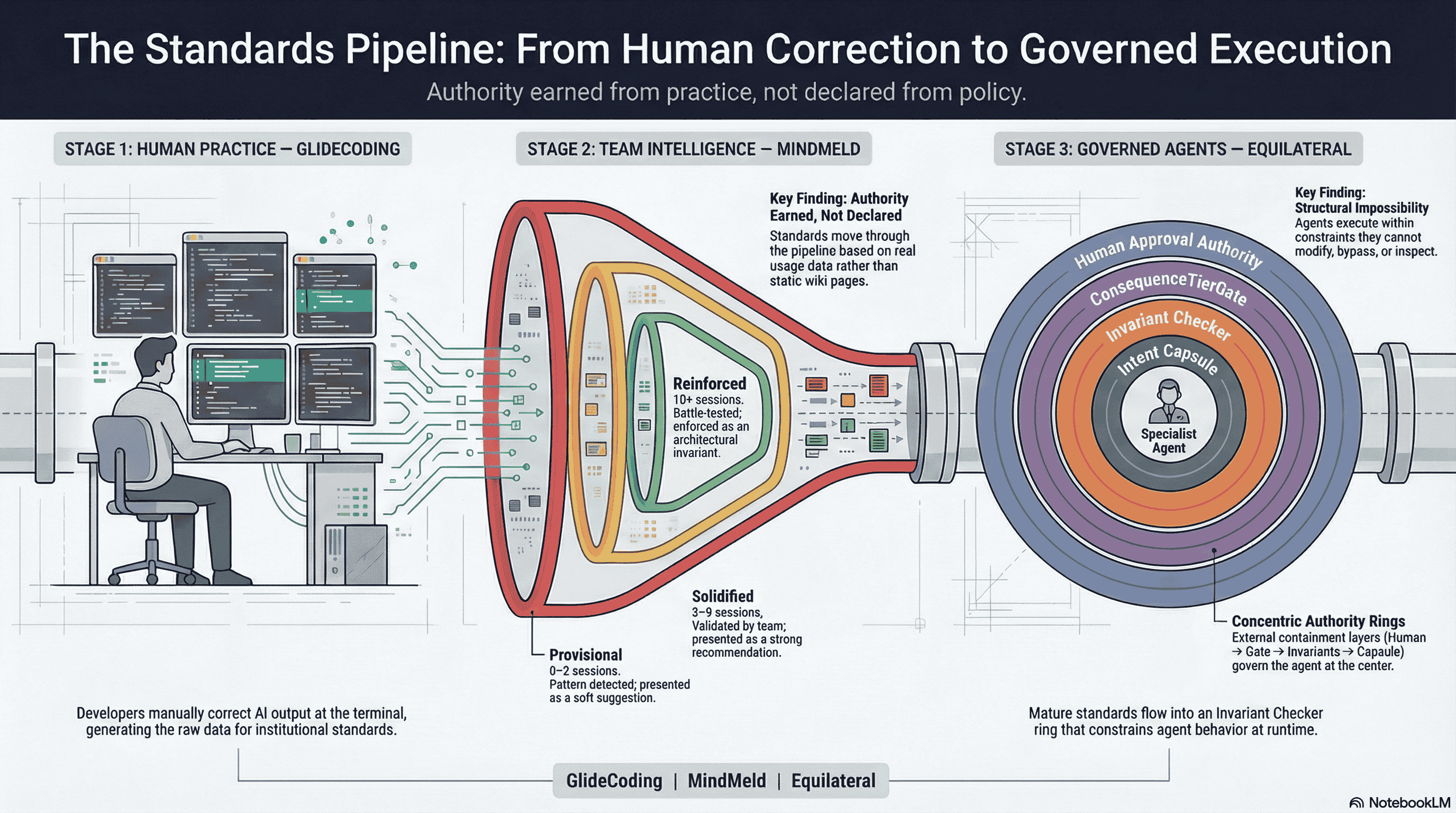

Your engineers correct AI output every day. Those corrections are institutional knowledge. MindMeld captures them, promotes them through an evidence-based maturity lifecycle, and produces curated governance standards with full human attribution.

Those standards become the Rapport invariants that Equilateral's agents are bound by. The ConsequenceTierGate checks them before every action. The InvariantChecker enforces them at runtime. The GovernanceMonitor tracks compliance over time.
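To make the runtime half of that concrete, here is a small sketch in which invariants are named predicates over an action, a checker reports which ones fail, and a monitor keeps a running compliance rate. The class names echo the components above, but the interfaces, the dict-shaped action, and the example invariant are assumptions.

```python
from typing import Callable, Dict, List

Invariant = Callable[[dict], bool]  # returns True when the action complies


class InvariantChecker:
    def __init__(self, invariants: Dict[str, Invariant]):
        self._invariants = dict(invariants)

    def violations(self, action: dict) -> List[str]:
        # Names of every invariant the action breaks; empty means compliant.
        return [name for name, holds in self._invariants.items() if not holds(action)]


class GovernanceMonitor:
    def __init__(self):
        self.checked = 0
        self.compliant = 0

    def record(self, violations: List[str]) -> None:
        self.checked += 1
        if not violations:
            self.compliant += 1

    def compliance_rate(self) -> float:
        return self.compliant / self.checked if self.checked else 1.0


# Hypothetical invariant distilled from a repeated human correction.
checker = InvariantChecker({"no_force_push": lambda a: not a.get("force", False)})
monitor = GovernanceMonitor()

for action in ({"kind": "push", "force": False}, {"kind": "push", "force": True}):
    monitor.record(checker.violations(action))
```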

This is not top-down policy. This is bottom-up governance. Standards are earned through repeated human practice, not declared by someone writing a wiki page. By the time a standard constrains an agent, it has been validated by multiple developers across multiple sessions with documented evidence.

Git versions code. MindMeld versions the knowledge that governs AI. Every correction enters the system with full human attribution: who discovered it, when, and how many developers validated it. Standards advance through an evidence-based maturity lifecycle: provisional, solidified, reinforced. Standards that stop being validated by active practice lose authority and decay. A system that cannot forget cannot govern.
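The lifecycle can be sketched as a small state machine. The three stage names come from the text; the promotion thresholds, the 90-day decay window, and the `Standard` class are invented for illustration and are not MindMeld's actual values.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta
from typing import Optional, Set

STAGES = ("provisional", "solidified", "reinforced")
PROMOTE_AT = {"provisional": 3, "solidified": 6}  # distinct validators needed (hypothetical)
DECAY_AFTER = timedelta(days=90)                  # hypothetical staleness window


@dataclass
class Standard:
    rule: str
    discovered_by: str
    discovered_at: datetime
    stage: str = "provisional"
    validators: Set[str] = field(default_factory=set)
    last_validated: Optional[datetime] = None

    def __post_init__(self):
        # The discoverer counts as the first validator.
        self.validators.add(self.discovered_by)
        self.last_validated = self.discovered_at

    def validate(self, developer: str, when: datetime) -> None:
        self.validators.add(developer)  # repeat validations by one person count once
        self.last_validated = when
        threshold = PROMOTE_AT.get(self.stage)
        if threshold is not None and len(self.validators) >= threshold:
            self.stage = STAGES[STAGES.index(self.stage) + 1]

    def is_authoritative(self, now: datetime) -> bool:
        # A standard nobody has validated recently stops constraining agents.
        return now - self.last_validated <= DECAY_AFTER


start = datetime(2025, 1, 1)
standard = Standard("Never force-push to main", "alice", start)
standard.validate("bob", start + timedelta(days=1))
standard.validate("carol", start + timedelta(days=2))  # third distinct validator
```

After the third distinct validator the sketch promotes the standard to `solidified`; if nobody validates it again within the window, `is_authoritative` turns false.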

Engineers correct AI output daily using Glide Coding standards

MindMeld captures corrections and promotes patterns through evidence-based maturity

Equilateral enforces standards as runtime invariants agents cannot override

Equilateral AI is the governance research arm of Pareidolia LLC. The architecture described on this site — consequence tiers, authority layers, structural impossibilities, earned governance — is operational infrastructure. It powers our own development and production workflows.

We publish our thinking openly. The blog is where the thesis develops. If you’re building governed AI systems, the architecture patterns are here. If you want to talk about how they apply to your problem, we’re here too.

Equilateral was discovered, not designed. The governed orchestration architecture was extracted from real production systems solving real problems, not conceived in a pitch deck. Every governance layer and every patent filing came out of solving problems that cost real money when they went wrong.

Today, Equilateral powers our own development and production workflows. The architecture described on this site is operational; it is how we work.

Built by James Ford—30+ years in enterprise architecture. Chief Architect at ADP for 24 years. Brought 5 of the first 6 SaaS products to market at ADP, at the birth of SaaS. ISO 27001:2022 aligned. SOC 2 Type II principles. AWS native from day one.

The summarizer becomes the sender becomes the transactor. AI agents escalate from T1 to T4 one feature at a time — but governance is reviewed at launch, not at each capability expansion.

Anthropic’s 1M token context window makes the problem worse, not better. Your governance rules are tokens competing for attention — and they’re losing.

The Anthropic-Pentagon controversy exposed a structural pattern: governance by policy drifts under pressure. Governance by architecture holds.